Motivation

We introduce CLAIRify: a novel approach that combines automatic iterative prompting with program verification to ensure programs written in domain-specific languages are syntactically valid and incorporate environment constraints.

Problems with using large language models for task-plan generation:

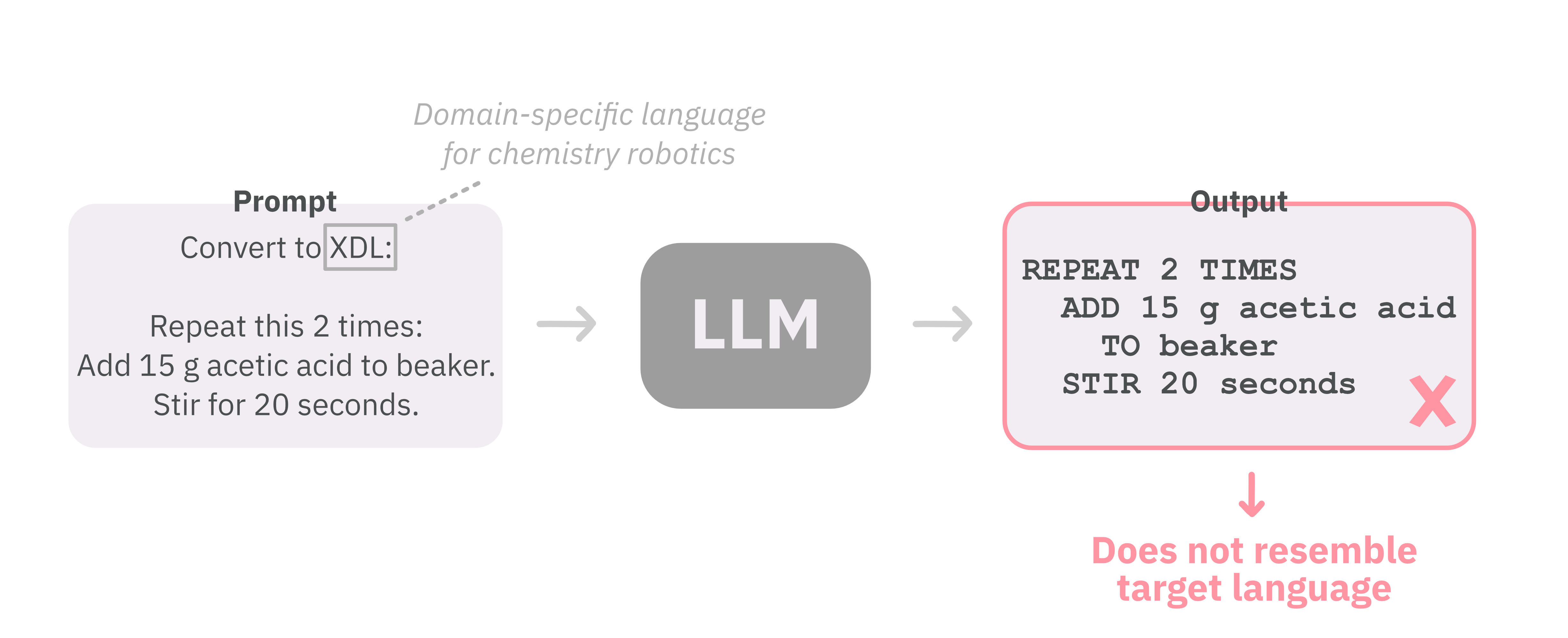

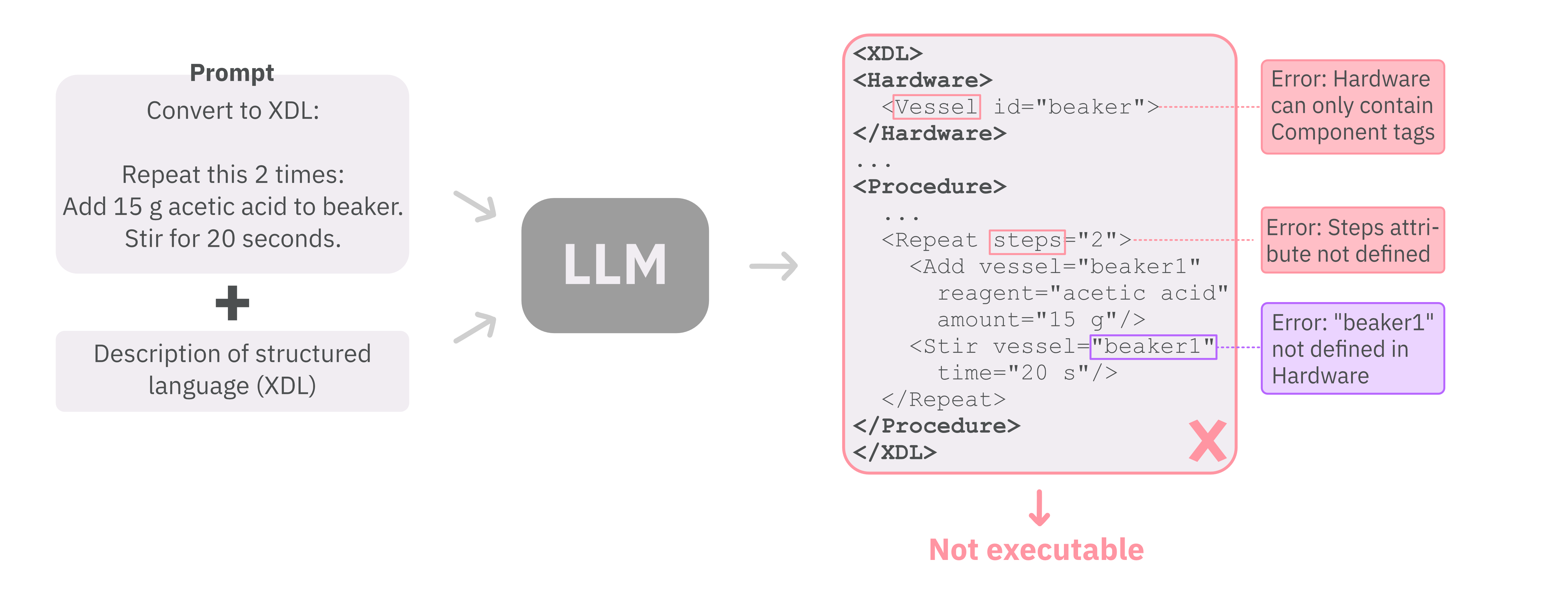

- Poor performance for data-scarce domain-specific languages (DSLs). We demonstrate that for XDL, a language used in robotics for chemistry lab automation, vanilla zero-shot prompting of LLMs produces erroneous task plans.

- Lack of task-plan verification. Generated task plans might not be executable by a robot because they are syntactically incorrect and/or incorporate resources not present in the environment.

Our solution: CLAIRify

CLAIRify is a framework that translates natural language into a domain-specific structured task plan using an automated iterative verification technique to ensure the plan is syntactically valid in the target DSL. It also takes into account environment constraints if provided.
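The generate-verify loop described above can be sketched as follows. This is a minimal illustration under stated assumptions, not the CLAIRify implementation: the `generator` callable stands in for an LLM call, `ALLOWED_ACTIONS` is a made-up subset of action names rather than the real XDL specification, and the `vessel` attribute check is a hypothetical example of an environment constraint.

```python
import xml.etree.ElementTree as ET
from typing import Callable, List, Optional, Set

# Hypothetical subset of action names, for illustration only;
# this is NOT the real XDL specification.
ALLOWED_ACTIONS = {"Add", "Stir", "Transfer"}


def verify(plan: str, available_vessels: Optional[Set[str]] = None) -> List[str]:
    """Return a list of error messages; an empty list means the plan passed."""
    try:
        root = ET.fromstring(plan)
    except ET.ParseError as exc:
        return [f"Plan is not well-formed XML: {exc}"]

    errors = []
    for step in root:
        # Syntax check: every step must be a known action.
        if step.tag not in ALLOWED_ACTIONS:
            errors.append(f"Unknown action '{step.tag}'")
        # Environment check (only if constraints were provided).
        vessel = step.get("vessel")
        if (available_vessels is not None and vessel is not None
                and vessel not in available_vessels):
            errors.append(f"Vessel '{vessel}' is not available in the environment")
    return errors


def generate_plan(
    instruction: str,
    generator: Callable[[str, List[str]], str],
    available_vessels: Optional[Set[str]] = None,
    max_iterations: int = 5,
) -> Optional[str]:
    """Re-prompt the generator with verifier errors until the plan passes."""
    feedback: List[str] = []
    for _ in range(max_iterations):
        plan = generator(instruction, feedback)
        feedback = verify(plan, available_vessels)
        if not feedback:
            return plan
    return None  # no valid plan within the iteration budget


def stub_generator(instruction: str, feedback: List[str]) -> str:
    """Stand-in for an LLM: first emits an invalid action, then corrects it."""
    if not feedback:
        return '<Procedure><Mix vessel="beaker"/></Procedure>'
    return '<Procedure><Stir vessel="beaker"/></Procedure>'


if __name__ == "__main__":
    print(generate_plan("Stir the solution in the beaker.", stub_generator, {"beaker"}))
```

In this sketch the verifier's error messages are passed back to the generator verbatim, so each new attempt can address the specific failures of the previous one. The loop gives up after `max_iterations` attempts instead of returning an unverified plan.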

Example of iterative prompting with CLAIRify

Here, we demonstrate an interaction between the generator and the verifier to generate an XDL task plan.

Results

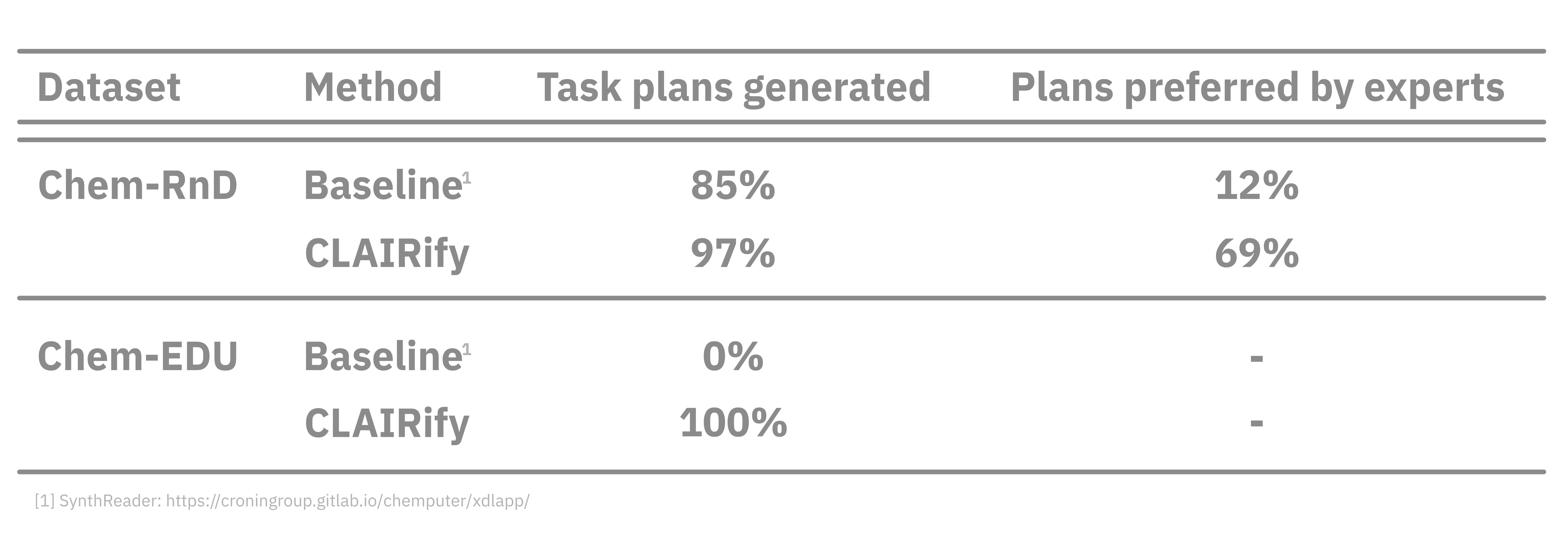

We compare the performance of CLAIRify with a state-of-the-art baseline on two datasets we curated: (1) Chem-RnD (organic chemistry synthesis protocols) and (2) Chem-EDU (household chemistry experiments executable by our robot). We report the number of plans successfully generated, as well as expert preference: given two anonymized plans for the same instruction, we asked experts to pick their preferred plan or classify the two as equally good. The data can be accessed here.

Robot demo

To execute our generated plans on a real robot, we integrate our pipeline with a Task and Motion Planner. We show two robot experiments from the Chem-EDU dataset:

- pH experiment: mixing baking soda with red cabbage solution

- Food preparation: making lemonade